Back after a very hard week!

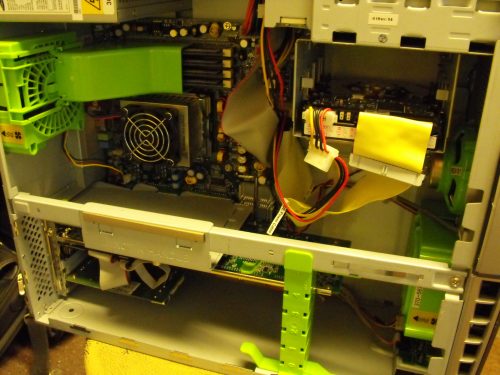

Some of you may have noticed that the icebox server was down and unavailable last week. This was the result of my own boneheadedness in thinking that a 25-watt PCI card is somehow hot pluggable. Compound that with being sick at the beginning of the week, and that brings us to story time:

As many of you know, I have been messing around with the Sun PCi cards, testing some for a friend and making others work in my server. While testing, I had been hot plugging the cards without knowing that they aren’t hot pluggable. I should have figured that a card drawing 25 watts from a PCI slot probably isn’t. The interesting thing is that it actually worked the first time and let me fire up a new card in the system. The second time around, the kernel crashed and the system rebooted. When the system came back up, the boot archive had been corrupted along with a couple of config files in the /etc directory. Not good.

I spent hours restoring the boot archive and was finally able to boot the machine back to life. I had nuked one of my mirrored drives in the process, but that was not too big of a deal because I knew I could just sync them up again once I was finished. I made a few finishing touches, had the system back on its feet, and then initiated a re-sync of the mirrored drives. That is when I was bitten really hard in the behind. It was nearly 6am, the long late hours had caught up with me, and I attempted to move the mounted root file system to the metadevice I had just created after already starting the sync. When setting up RAID 1 in Solaris, those two steps are supposed to be done in the opposite order: change your root drive first, reboot, then start syncing your second drive. Not only did I do them backwards, I also forgot the reboot.
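For anyone who wants the right order spelled out, here is a rough sketch of the usual Solaris Volume Manager sequence for mirroring a root disk. The slice names (c0t0d0s0, c0t1d0s0) and metadevice names (d10, d11, d12) are made up for illustration and are not the ones from my server. It also assumes the state database replicas already exist. The script only prints the commands so they can be reviewed; it does not run anything.

```python
def root_mirror_plan(root_slice, second_slice,
                     mirror="d10", sub1="d11", sub2="d12"):
    """Return the SVM commands for a root mirror, in the order they should run.

    All device and metadevice names are hypothetical placeholders.
    """
    return [
        # Wrap the live root slice in a submirror (-f because it is mounted).
        f"metainit -f {sub1} 1 1 {root_slice}",
        # Wrap the second disk's slice in its own submirror.
        f"metainit {sub2} 1 1 {second_slice}",
        # Create a one-way mirror containing only the live root submirror.
        f"metainit {mirror} -m {sub1}",
        # Point the system at the mirror (updates /etc/vfstab and /etc/system).
        f"metaroot {mirror}",
        # Reboot so the system is actually running from the metadevice.
        "init 6",
        # Only now attach the second submirror, which starts the sync.
        f"metattach {mirror} {sub2}",
    ]


if __name__ == "__main__":
    for step, command in enumerate(root_mirror_plan("c0t0d0s0", "c0t1d0s0"), 1):
        print(f"{step}. {command}")
```

The point of the ordering is that the sync (metattach) comes last, after the root change and the reboot, which is exactly the part I got backwards.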

As soon as I attempted this operation the kernel panicked and the system reset, only to come back up to a hard drive that would not boot at all, not even into failsafe mode. After plopping the Solaris disc back in the tray and running fsck on the root drive, my worst nightmare came true: the drive came up as blank. My stunt had wiped both drives of their filesystem headers. Every time I ran fsck it would recover a couple of files into lost+found, and the rest of the drive showed up as empty. I needed this drive to work badly, because I hadn’t realized how crucial it was to back up the md.conf file and a couple of other things in /etc and /kernel in order to restore my RAID 5 array, which holds all the data near and dear to my heart. I created a script to run fsck in an endless loop all night and slowly recover files. I let it run for a full two days, and every time I came up empty. I finally gave up and had to start looking for other options.

I then started using the metainit command with the -k option to try to re-create the array while retaining all the data on the drives. The problem was that while trying to recover the metadbs from the RAID 5 disks, I wrote to the wrong partition, effectively rendering one of the disks useless. Normally this isn’t so bad; it’s equivalent to a disk needing to be replaced, and the data is all still available. But since metainit seemingly depends on all the disks being OK to reconstruct md.conf, I was unable to complete this process. Just when it seemed I was done for, I caught a break, apparently from 6am the night before.

Somewhere between getting my system up the first time and crashing it while attempting to re-create the RAID 1, I had managed to tarball the entire 725GB from my array and store it on one of my SATA backup drives. I was then able to re-create the array and restore the tarball, putting everything back the way it was. I never actually lost any web hosting data from this outage, as that and the logs are backed up to a separate disk. I had no regular means of backing up the master array, but it’s now clearer than ever that I need one.

In my experimentation, I found that creating the array with 1MB blocks instead of the default 32KB increased performance considerably in my situation. I think this is because I am using “large” 146GB disks, and there are 10 of them in my RAID 5 array. With 32KB blocks I was looking at 30+ hours to restore from my SATA drive back to the RAID 5; with 1MB blocks it was done in 18 hours. Most of the files I work with are large, so I think the larger block size helps there.
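To put those restore times in perspective, here is a quick back-of-the-envelope calculation. It assumes the restore moved the full 725GB tarball, uses decimal gigabytes, and treats the "30+ hours" estimate as exactly 30, so the first figure is an upper bound at best.

```python
def throughput_mb_per_s(size_gb, hours):
    """Average throughput in MB/s for moving size_gb (decimal GB) in the given hours."""
    return size_gb * 1000 / (hours * 3600)


SIZE_GB = 725  # size of the tarball restored to the array

if __name__ == "__main__":
    for label, hours in (("32KB blocks", 30), ("1MB blocks", 18)):
        print(f"{label}: {throughput_mb_per_s(SIZE_GB, hours):.1f} MB/s")
    print(f"speedup: {30 / 18:.2f}x")
```

That works out to roughly 6.7 MB/s versus 11.2 MB/s, or about 1.67 times faster. Neither number is fast in absolute terms, but over a restore this size the difference was half a day.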

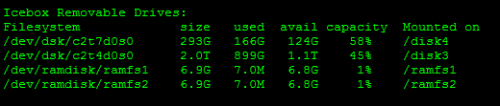

In further experimentation, I thought of something kind of neat and different. Since my server has 32 gigs of RAM available for whatever I want, I decided to create a pair of RAM disks to complement the Sun PCi cards.

This works by creating and running the disk image from the RAM disk; you can then shut down the PCi card and back up the image to hard disk every so often. This makes a great container for inspecting hard drives or programs that may carry viruses, etc. If any fatal OS changes are made, you can just erase the image file from the RAM disk and restore your last archived copy. Of course this relies on your computer not being turned off on a regular basis, so if you run your PCi card in a giant server in a distant closet, this may be the solution for you.

I want to write a program or shell script that will handle changing the configuration files and syncing the images to the RAM disk automatically. In testing, I believe the speed increase is worth the effort; it’s pretty funny watching defrag finish in 30 seconds. There are so many things I like about an entire operating system being held in volatile memory that I am sure others will share the same enthusiasm.
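I haven't written that script yet, but the image-syncing half might look something like the sketch below. The function names and paths are hypothetical, and it deliberately leaves out the configuration file changes. It assumes the PCi card is shut down before the image is copied in either direction.

```python
import os
import shutil
from pathlib import Path


def load_image(archive: Path, ramdisk_dir: Path) -> Path:
    """Copy the archived disk image onto the RAM disk and return its new path."""
    target = ramdisk_dir / archive.name
    shutil.copy2(archive, target)
    return target


def archive_image(live: Path, archive: Path) -> None:
    """Copy the live image back to hard disk.

    The copy goes to a temporary file first and is then renamed over the old
    archive, so a crash mid-copy never leaves a half-written archive behind.
    """
    tmp = archive.with_name(archive.name + ".tmp")
    shutil.copy2(live, tmp)
    os.replace(tmp, archive)


# Example usage (hypothetical paths):
#   live = load_image(Path("/export/images/c.diskimage"), Path("/ramdisk1"))
#   ... run the card, shut it down ...
#   archive_image(live, Path("/export/images/c.diskimage"))
```

Restoring after a fatal OS change is then just a matter of calling load_image again, which overwrites the image on the RAM disk with the last archived copy.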

In other news…

What else has been going on? Well, there are more than a few new arrivals at the shop: more hardware for resale, including some new old stock Sun stuff, which is pretty cool. I also scored a SunBlade 1500 workstation that was fully loaded minus a hard drive. It came with a 1.5GHz CPU, 8GB of RAM, and an XVR-600 graphics card. Not bad!

I also picked up a pair of SunFire V120s. They are identical systems, so I will part ways with one and keep the other. I figure one will make a great backup server to cover for the downtime the massive V880 has from time to time (oops), creating a bit of a failsafe. This also brings me to the next part 🙂

I picked up a couple of 20/40GB Sun tape drives. These drives are in great shape, and I think they are just the ticket for how I want to orchestrate my web server failover plan. Since I have two drives, I will keep one at my colocation with the V120 on standby duty, and one attached to the V880 on active duty making nightly backups. In the event of an outage, the V120 will park all the web pages on a maintenance page until the previous night’s backups are restored from tape, which in my case takes just a few hours.

Seems like a pretty solid plan, and I have a good way to catalog my data by organizing it onto tape. At least that way I don’t need to lug around the really, really old stuff I rarely use. The possibilities are endless for the moment, so I’ll just enjoy the thoughts and have fun.

These V120s still have a good place in today’s business world. People forget that these servers supported thousands and thousands of simultaneous connections; whether web or transactional, they chew through it. I think if I work on a package hard enough, this hardware will still easily outsell new hardware. That’s just my opinion though 🙂

Web-hosting related:

There has been a lot more interest lately in hosting websites for people. Let’s say you are a business and you want to host a small website. In most cases this can be a huge ordeal, especially if you are working with a web developer, but I will start by asking you this: can you use Facebook? If so, you are literally a few hours of Facebooking away from creating your own website. As a bit of an experiment, I have been setting up the framework for people and turning them loose on creating their own websites.

Imagine for a second that all the hosting and framework were taken care of, and all you needed to create and post content to your website was something as easy to use as Microsoft Word. Instead of relying on a hired web developer’s insight, you can create your own site the way you want it. Granted, there are always situations where expert web development is required. However, the vast majority of small businesses I run into that need sites don’t really need anything spectacular, just more of an e-business card. There isn’t much need for all of this hosting and hiring a web developer nonsense; a lot of cost can be saved by investing a few hours into creating a website for your business, and you don’t even need to know a single line of code.

I am pretty convinced the software I have been working with as a content management system is incredibly easy to use. I have had absolutely no issues working on my website since I moved to the WordPress CMS. (If you’re not sure what a CMS is, it stands for Content Management System, and is the “framework” used for constructing a website, blog, or other page.)

Hosting from my server, at least, is $10 a month, and I take care of upgrading any web software as needed along with all server maintenance. One thing I do encourage, though, is that every small business host its own web server from its own internet connection. I know many people out there may laugh, but in the small business scheme of things, is it really justified to pay lots of money to host a website for a customer base that doesn’t visit nearly as often as the customers of, say, HP, Dell, or Facebook? Granted, with paid hosting one gets an uptime guarantee and doesn’t have to deal with the cost and maintenance of server hardware, but times have changed a bit since yesteryear.

Computers are more readily available than ever before, and they cost less than anyone could have imagined in the 1980s. On top of this, the dot-com bubble brought further price decreases in server hardware, even causing a company to go under. The result is interesting: I can get a computer that originally cost $150k+ for pennies on the dollar. In my case it was a total of $300 for a computer that sold for that $150k price tag 10 years ago. Another $300 in hardware goodies and I had a server that I know will easily last another 10 years. For those who aren’t quite sure what I’m talking about yet, the easiest example is Sun Microsystems. Their overall goal was to provide powerful and reliable hardware, and as a result companies had to pay a fairly hefty price, and that they did. When the dot-com bubble burst and everyone went under, this once expensive hardware wasn’t so expensive anymore, but it definitely didn’t lose the reliability or the special design characteristics that set it apart from its competition.

This gave me the opportunity to build the server that I have today. Not only do I get to mess around with something that normally requires a fancy higher education, but I can also create an avenue to provide inexpensive services to those who don’t fit the normal web hosting bill. I have been experimenting for years with hosting other small business sites, and I have achieved pretty good uptime, being down only a few days out of last year. That’s pretty good considering the experiments I put my server through (such as the SunPCI project). These machines are definitely more resilient than the way they are treated in a normal IT environment would suggest. (Which is great, but just keep on thinking they’re fragile or it’ll bite you in the ass once you get ballsy.)

You know, I think I will do a video on this. It makes for an interesting discussion, so why not.

There is much more to come. I have a video that needs one final finishing touch, and then it’s going up. I was getting ready for my buddy’s wedding last week and spent most of the weekend out there. It was a blast, but now I can finally resume the regularly scheduled program.